Starting in the first semester of 1L year in law school, it’s been drilled into our heads that we must rely on a “citator” like LexisNexis’ Shepard’s, Westlaw’s KeyCite, Bloomberg Law’s BCite, or Casetext’s SmartCite. We’ve been told that, if we don’t, we risk citing “bad law”: a case that’s been overturned, reversed, or undermined by subsequent authority.

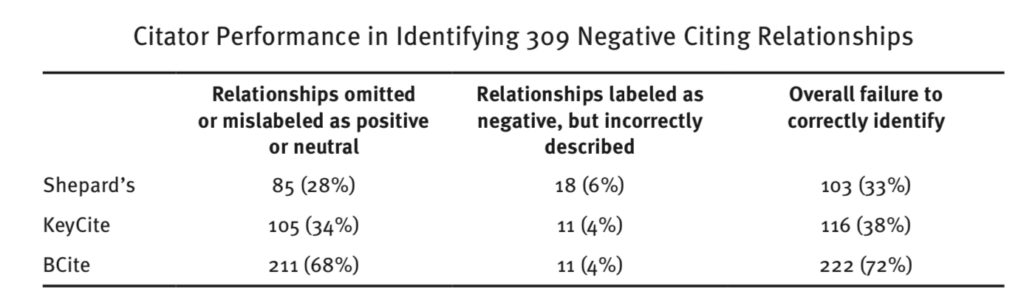

But new research shows that over-reliance on a simplistic flag system is dangerous. A recent article by Paul Hellyer, a law librarian at William & Mary Law School, demonstrates that Shepard’s, KeyCite, and BCite are wrong a lot.

The article, Evaluating Shepard’s, KeyCite, and BCite for Case Validation Accuracy, is “the largest statistical comparison study of citator performance for case validation.” The shocking takeaway of this study: only 15% of the time did all three systems agree that a particular case had received negative treatment; “in 85% of these citing relationships, the three citators do not agree on whether there was negative treatment.” They can’t all be right. In that 85%, at least one system either missed negative treatment or flagged it wrongly. Yikes.

As Kristina Niedringhaus, the Associate Dean of Library and Information Services and an Associate Professor at Georgia State University College of Law, said in her review of the study: the results of the study are “distressing.”

To understand the results of the study, it’s important to understand why systems like Shepard’s, KeyCite, or BCite might miss negative treatment. Running a legal research company has given me insight into this issue.

The primary reason legacy research systems make these mistakes, I believe, is that they use largely editor-driven — i.e., human-driven — processes. Humans make mistakes.

It’s part of why we at Casetext rely on artificial intelligence to do some of the heavy lifting for SmartCite: machines are getting increasingly good at automating this process, and are less error-prone than humans.

We also rely on intelligence extracted from the text of legal opinions themselves. For example, Casetext SmartCite has yellow flags that note where a case has been cited with a contradictory citation signal (but see, but cf., contra, etc.). We do this because attorneys want to see how judges cite the case they’re about to rely on. And because we’re leveraging the collective wisdom already contained in the common law, it’s much more likely to be useful information.

All that said, humans are a part of our process too. For example, attorneys hand-check the results of the machine learning algorithms. Although our internal analyses of our performance show us doing a lot better than the systems studied by Hellyer, I am certain we are not without error.

So even the most advanced, technology-driven system can make mistakes. Given that none of these systems is perfect, and that relying on the presence or absence of a “red flag” may not be enough, how can you avoid citing bad law?

There’s one simple tip that I’ve used since law school that almost always works:

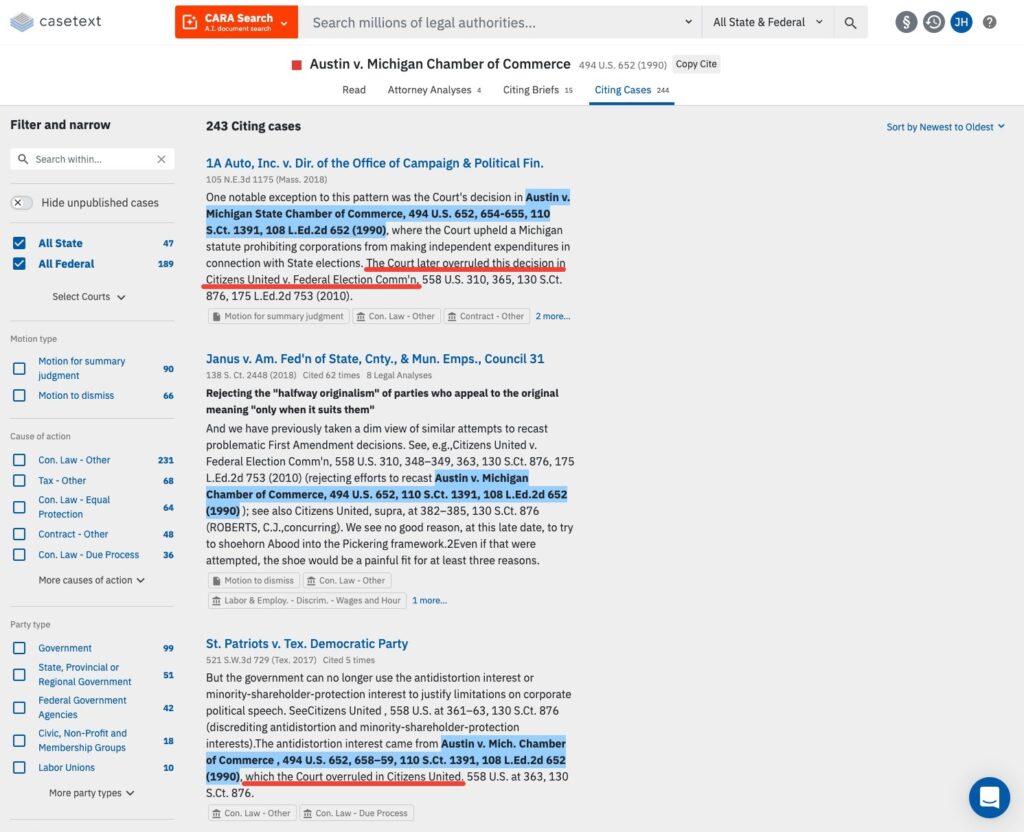

Most legal research systems make this trivially easy. On Casetext, you simply click the “Citing Cases” tab and then “Sort by Newest to Oldest.”

Why do this? A few reasons. First, if a case has been overruled, that’s almost always made obvious by how recent cases talk about it. Or it won’t be talked about at all recently, which is itself a potential signal about the case’s continued validity (although that does not conclusively say anything one way or another).

For example, look at how Austin v. Michigan Chamber of Commerce has been discussed since it was overruled by Citizens United. More recent courts make clear, as they discuss it, that the case has been overruled.

Second, even if a case has a “red flag” on it, the case may still be good law for some propositions. For example, McGore v. Wrigglesworth, an opinion from the Sixth Circuit Court of Appeals, has been overruled in part. But it is still being cited to this day, multiple times a week, for propositions for which it remains good law. This indicates that it is still potentially citable.

Using this simple method can help you avoid mistakes. Instead of relying on a citator’s flags alone, you will see for yourself in just a few moments how a case has been discussed recently. That signal may be as important as, if not more so than, anything else.
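For readers who like to see a process written out, here is a minimal Python sketch of the "newest citing cases first" check described above. It is only an illustration, not how Casetext or any other citator works: the `CitingCase` record, the `NEGATIVE_PHRASES` list, and the function names are all hypothetical, and the sample entries are placeholders rather than real opinions.

```python
import re
from dataclasses import dataclass
from datetime import date

# Hypothetical, non-exhaustive phrases that often accompany negative treatment.
NEGATIVE_PHRASES = ("overruled", "abrogated", "superseded", "but see", "but cf.", "contra")


@dataclass
class CitingCase:
    name: str       # placeholder case name
    decided: date   # date the citing opinion was issued
    snippet: str    # passage in which the citing opinion discusses the case


def newest_first(citing_cases):
    """Return citing cases sorted from most recent to oldest."""
    return sorted(citing_cases, key=lambda c: c.decided, reverse=True)


def negative_phrases_in(snippet):
    """List the phrases from NEGATIVE_PHRASES that appear in the snippet."""
    lowered = snippet.lower()
    hits = []
    for phrase in NEGATIVE_PHRASES:
        # (?<!\w) and (?!\w) keep "contra" from matching inside "contract".
        if re.search(r"(?<!\w)" + re.escape(phrase) + r"(?!\w)", lowered):
            hits.append(phrase)
    return hits


def review_queue(citing_cases, limit=5):
    """Pair each of the most recent citing cases with any warning phrases found."""
    recent = newest_first(citing_cases)[:limit]
    return [(case, negative_phrases_in(case.snippet)) for case in recent]


if __name__ == "__main__":
    sample = [
        CitingCase("Placeholder v. Older", date(2005, 1, 1), "We follow this case."),
        CitingCase("Placeholder v. Newer", date(2015, 6, 1), "That decision was overruled in part."),
    ]
    for case, hits in review_queue(sample):
        print(case.decided, case.name, hits or "no warning phrases")
```

A keyword match like this only tells you which recent opinions to read first. It cannot tell you whether a case remains good law for the proposition you care about, which is why reading the recent discussion yourself is the point of the exercise.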

All this said, citator systems like Shepard’s, KeyCite, or Casetext’s SmartCite let you see at a glance a lot of information, will save you a lot of time, and still have a lot of value. If this is the sort of stuff that interests you, I encourage you to check out what we’ve done with SmartCite — which goes far beyond the legacy publishing companies and gives a level of intelligence and information you’d expect from a modern legal research platform.
